Mastering Video Content with AI: Transcription, Translation, and Summarization

AI is a powerful tool for automating many day-to-day tasks, and it can make employees dramatically more productive, getting more done in less time. With the emergence of open-source AI solutions like Whisper from OpenAI, the advancement of LLMs (large language models), and innovative MLOps (machine learning operations) solutions like cnvrg.io, it has never been easier to incorporate AI into business workflows.

In this guide, we show how easy it is to build a robust AI solution that automatically transcribes and summarizes video content, even if it is in another language! This step-by-step tutorial walks you through creating a summarization pipeline that turns any video file or YouTube link into text, translating it when needed, using the cnvrg.io platform on Intel Developer Cloud resources.

This comprehensive guide will give you ideas on how to automate different workflows and how to incorporate this video summarization AI solution into your day-to-day work. You'll learn more about the popular open-source solution Whisper and how to apply it in your pipeline with cnvrg.io. By combining Whisper with large language models, you can not only transcribe videos to text but also translate them for a wider audience.

The Power of AI in Video Summarization for your Business

There are many ways that automated video summarization can improve productivity within a business or in your day-to-day life. Here are a few ideas that can boost productivity for different lines of business, from marketing to sales to social media specialists and more.

For marketers, the generated summaries offer a concise and informative snapshot of the content, making it easy to identify key messages, extract vital data, and quickly produce copy and descriptions for video streaming platforms, landing pages, and more.

For sales teams, the solution becomes an indispensable tool to understand customer needs and preferences. By summarizing videos containing customer calls, testimonials, product reviews, or industry discussions, sales professionals can quickly extract relevant information, address pain points, and tailor their sales pitches accordingly. The result? Improved communication, enhanced customer engagement, and increased conversion rates.

Content creators, too, can harness the power of this solution to expand their reach and engagement. By transcribing and translating their own video or audio content, they can provide captions or translated versions, catering to a wider audience in a fraction of the time. The generated summaries also serve as a valuable resource to repurpose existing content into written articles or blog posts, amplifying content reach and appeal.

While these are specific use cases, this solution is valuable to anyone who wants the key messages from a video without spending time watching it, whether that means building a meeting summary, quickly learning the takeaways from a webinar, or even summarizing a full movie! Now, let's learn a bit about how this solution is made possible and about some of the technology behind it.

What is Whisper and how does it work?

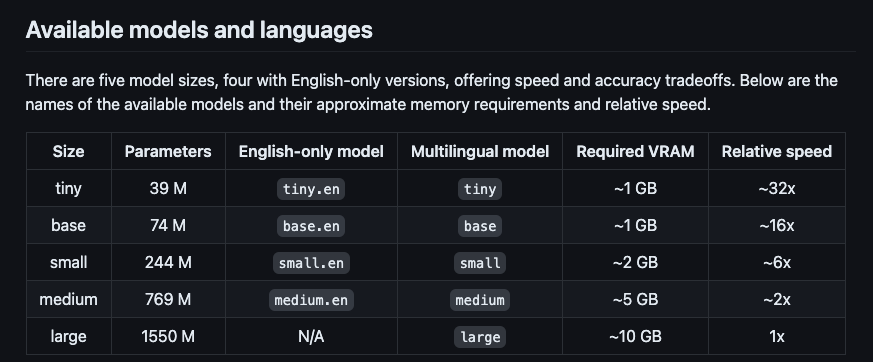

Whisper is a neural network for speech recognition that approaches human-level robustness and accuracy. Under the hood it is an encoder-decoder Transformer trained on about 680,000 hours of multilingual audio, which is why it can both transcribe speech and translate it into English. The Whisper model is fully open source, with its code and model weights publicly released on GitHub. It comes in five model sizes (tiny, base, small, medium, and large) that trade speed and memory use against accuracy, so you can pick the one that fits your available compute. You can host the model yourself with cnvrg.io's Metacloud offering, which lets you connect any compute that fits your use case, whether on the Intel Developer Cloud or another cloud provider.
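To make the self-hosting option concrete, here is a minimal sketch assuming the open-source `openai-whisper` package (`pip install openai-whisper`) and ffmpeg are installed. The helper name `transcribe_locally` is hypothetical, and this is not the code used later in this tutorial, which calls OpenAI's hosted Whisper API instead.

```python
# Sketch only: self-hosted Whisper via the open-source `openai-whisper` package.
# Assumes `pip install openai-whisper` and an ffmpeg binary on the PATH.
WHISPER_MODEL_SIZES = ("tiny", "base", "small", "medium", "large")


def transcribe_locally(audio_path, model_size="base", translate=False):
    """Transcribe audio with a local Whisper model, or translate it into English."""
    if model_size not in WHISPER_MODEL_SIZES:
        raise ValueError(f"unknown Whisper model size: {model_size!r}")
    import whisper  # imported here so the size check above works without the package

    model = whisper.load_model(model_size)  # downloads the weights on first use
    # task="translate" makes Whisper output English text whatever the spoken language
    result = model.transcribe(audio_path, task="translate" if translate else "transcribe")
    return result["text"]
```

For example, `transcribe_locally("audio.mp4", model_size="small", translate=True)` would return English text for a non-English recording. Smaller sizes such as `tiny` and `base` run faster on modest hardware, while `medium` and `large` are more accurate but need more memory.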

Understanding LLMs and ChatGPT for Video Summarization

Once your pipeline transcribes or translates your video, the next step is to integrate an LLM that summarizes the text produced by Whisper. You can use any LLM, such as a Hugging Face summarization model, but in this example we use OpenAI's gpt-3.5-turbo model (ChatGPT 3.5).
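If you prefer the Hugging Face route mentioned above, a sketch along these lines could replace the ChatGPT call. It assumes the `transformers` library (with PyTorch) and the public `facebook/bart-large-cnn` checkpoint; `chunk_words` and `summarize_with_hf` are hypothetical helpers, and counting words is only a rough stand-in for counting tokens.

```python
# Sketch only: summarizing a transcript with a Hugging Face summarization pipeline.
# Assumes `pip install transformers torch`; helper names are hypothetical.


def chunk_words(text, max_words=500):
    """Split text into chunks of at most `max_words` words (a rough proxy for tokens)."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def summarize_with_hf(transcript, model_name="facebook/bart-large-cnn", max_words=500):
    """Summarize each chunk and join the partial summaries."""
    chunks = chunk_words(transcript, max_words)
    if not chunks:
        return ""
    from transformers import pipeline  # imported lazily; chunk_words needs no dependencies

    summarizer = pipeline("summarization", model=model_name)
    # BART-based models accept about 1,024 input tokens, so long transcripts go in pieces
    pieces = summarizer(chunks, max_length=130, min_length=30, do_sample=False)
    return " ".join(piece["summary_text"] for piece in pieces)
```

Summarizing chunk by chunk keeps each input under the model's length limit, at the cost of a less unified summary than a single LLM prompt that sees the whole transcript.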

What is an LLM and what does it do?

LLMs, or Large Language Models, are advanced artificial intelligence systems designed to understand and generate human-like text. They possess a vast amount of pre-existing knowledge and are capable of processing and generating language in a coherent and contextually appropriate manner. LLMs employ deep learning techniques to analyze patterns, context, and semantics, allowing them to answer questions, provide explanations, write essays, and engage in conversations. They can assist with language translation, summarize documents, generate creative content, and aid in various natural language processing tasks. LLMs have wide-ranging applications in research, customer service, content creation, virtual assistants, and more, revolutionizing the way we interact with AI technology. In this example, we are using ChatGPT 3.5 to summarize the text that was generated with Whisper from our video.

Leveraging cnvrg.io MLOps Platform for Video Summarization

Building and deploying this video summarization AI solution within cnvrg.io is simple, putting this technology directly into the hands of businesses and individuals. With cnvrg.io's user-friendly interface and comprehensive machine learning platform, even those without extensive coding experience can build AI into their workflows. Combining OpenAI's Whisper and ChatGPT models with cnvrg.io's infrastructure makes for a smooth and efficient workflow. Whether you're a marketer, sales professional, content creator, or researcher, cnvrg.io lets you combine automatic transcription, translation, and text summarization in one AI pipeline, making video summarization more accessible and efficient.

cnvrg.io is a machine learning (ML) platform that provides a comprehensive set of tools and infrastructure to streamline and accelerate the development and deployment of ML models on any infrastructure. It offers a collaborative environment where data scientists, developers, and engineers can work together on ML projects, from data preparation to model training and deployment.

At its core, cnvrg.io simplifies the entire ML lifecycle by providing a centralized workspace where users can manage their data, experiment with different algorithms and models, and deploy them on any infrastructure, whether in the cloud or on premises. cnvrg.io integrates natively with Intel Developer Cloud, giving you one-click access to Intel's latest cost-efficient resources for AI workloads. It also offers version control, experiment tracking, and automated hyperparameter tuning to ensure reproducibility and improve model performance.

How to Build your Own Video Summarization AI Solution with cnvrg.io

Now that you understand how this solution can improve productivity in your business, let's see how you can build it yourself. In this example you will start with a YouTube video, but Whisper accepts files in any of the following audio and video formats: mp3, mp4, mpeg, mpga, m4a, wav, and webm.
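Before sending a file to Whisper, it can help to check it up front. This sketch uses only the Python standard library; the helper `check_audio_file` is hypothetical, and the 25 MB limit is an assumption based on OpenAI's documented upload limit for its hosted Whisper API at the time of writing, so confirm the current value.

```python
from pathlib import Path

# Formats listed in this tutorial as supported by Whisper
SUPPORTED_EXTENSIONS = {".mp3", ".mp4", ".mpeg", ".mpga", ".m4a", ".wav", ".webm"}
# Assumption: 25 MB upload limit for OpenAI's hosted Whisper API (verify the current value)
MAX_UPLOAD_BYTES = 25 * 1024 * 1024


def check_audio_file(path, size_bytes):
    """Return a list of problems that would stop the file from being sent to Whisper."""
    problems = []
    suffix = Path(path).suffix.lower()
    if suffix not in SUPPORTED_EXTENSIONS:
        problems.append(f"unsupported format: {suffix or 'no extension'}")
    if size_bytes > MAX_UPLOAD_BYTES:
        problems.append(f"file is {size_bytes / 1_048_576:.1f} MB; the limit is 25 MB")
    return problems
```

In practice you would call it as `check_audio_file(path, os.path.getsize(path))` and stop if the returned list is not empty.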

Step-by-step guide:

In this section we show you how to build your own video summarization AI solution in cnvrg.io using Intel Developer Cloud. In just a few steps, you will automate video transcription and summarization in one pipeline and deploy your AI solution. Here is an overview of the steps for building your own automated AI video summarization solution:

- Download the audio from the YouTube video as an .mp3 file with Python

- Transcribe the YouTube video to text with Whisper, translating it to English if needed

- Summarize the video using an LLM (large language model) with an appropriate prompt

- Serve this function as a prediction endpoint for inference using cnvrg.io on Intel Developer Cloud resources

- (Optional) Add your own dataset to the pipeline or configure a feedback loop to store predictions as a versioned dataset in cnvrg.io

1. Downloading the YouTube video as an .mp3 file with Python

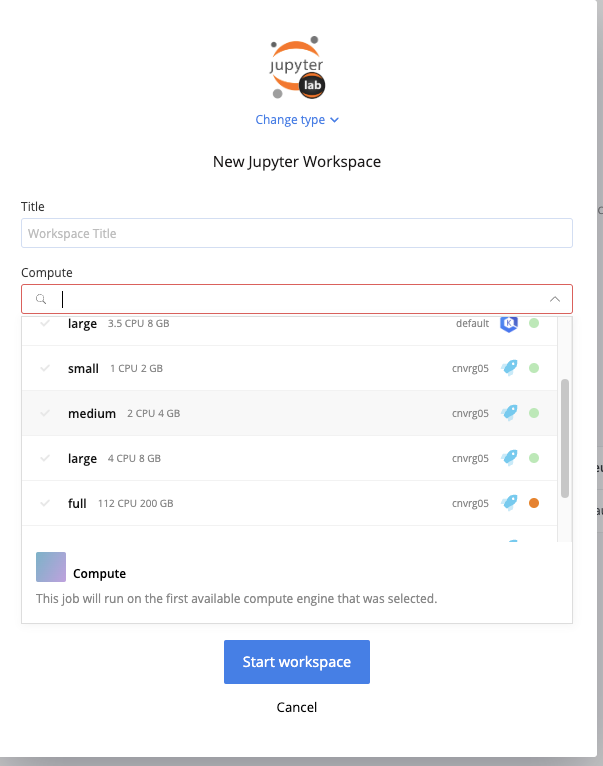

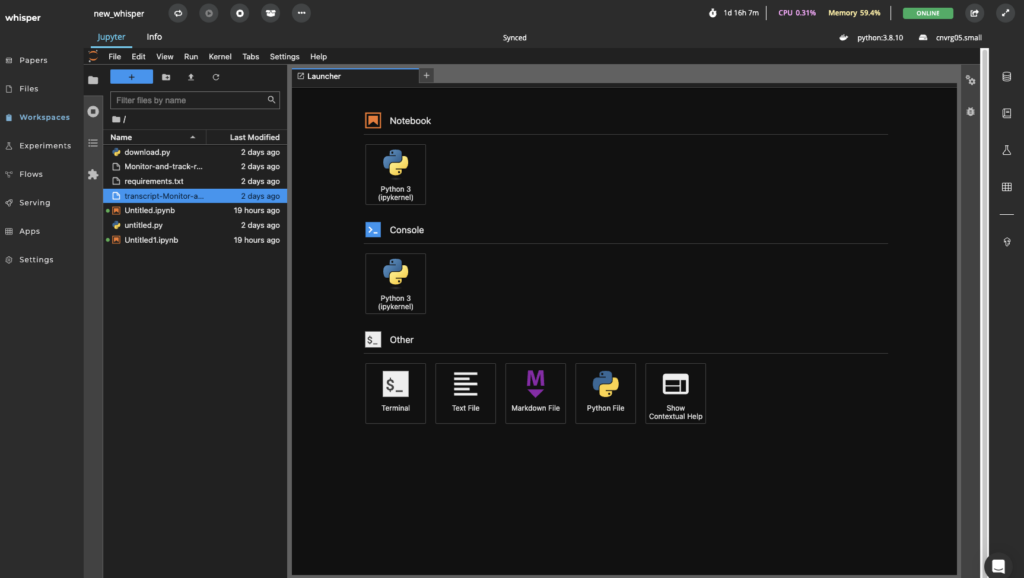

Launch a Workspace on cnvrg.io with Intel Developer Cloud Resources Attached

First, sign in to your cnvrg.io account. If you don't already have one, you can request access to cnvrg.io Metacloud.

Once you are signed in, open a workspace. A cnvrg.io workspace is an interactive environment for developing and running code, with automatic version control and compute that can scale to match the needs of your data science research.

Create a Python function in the workspace that downloads the audio from a YouTube link in .mp3 format.

The function in this example uses the Pytube library (https://pytube.io/en/latest/).
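The tutorial does not list its download function in text, so the following is a hedged sketch of what it might look like with Pytube's `YouTube` class and `streams.filter` method; `download_audio` is a hypothetical name. One caveat: YouTube serves audio in .mp4 or .webm containers, and renaming such a file to .mp3 does not convert it. This sketch keeps the .mp4 container, which is also on Whisper's list of supported formats; producing a true .mp3 would require transcoding, for example with ffmpeg.

```python
import os


def download_audio(youtube_url, output_dir="."):
    """Download the audio-only stream of a YouTube video and return the saved file path."""
    if not youtube_url:
        raise ValueError("a YouTube URL is required")
    from pytube import YouTube  # imported lazily so the check above runs without pytube

    yt = YouTube(youtube_url)
    # Pick an audio-only stream in an .mp4 container; .mp4 is on Whisper's supported list
    stream = yt.streams.filter(only_audio=True, file_extension="mp4").first()
    if stream is None:
        raise RuntimeError("no audio-only .mp4 stream found for this video")
    os.makedirs(output_dir, exist_ok=True)
    # download() returns the full path of the file it wrote
    return stream.download(output_path=output_dir, filename="audio.mp4")
```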

2. How to Use Whisper to Translate a YouTube Video to Text

Because Whisper is fully open source (the project is listed on GitHub), you can also run your own instance if you have enough compute, for example by connecting Intel Developer Cloud or other cloud provider resources through cnvrg.io's Metacloud managed MLOps platform. The code in this step instead calls OpenAI's hosted Whisper model (`whisper-1`) through the OpenAI API.

Using the Whisper model in our cnvrg.io workspace, we add an if statement that translates the audio into English when the video is not already in English (Whisper's translation task only outputs English). If the video is already in English, Whisper simply transcribes it to raw text.

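```python
import openai  # legacy SDK (openai<1.0); reads the OPENAI_API_KEY environment variable


def speech_to_text(audio_file, not_english):
    """Return English text for an audio file using OpenAI's hosted Whisper model."""
    if not_english:
        # Translation to English
        with open(audio_file, 'rb') as f:
            print('Starting translating to English ... ', end='')
            transcript = openai.Audio.translate('whisper-1', f)
            print('Done!')
    else:
        # Transcription of audio that is already in English
        with open(audio_file, 'rb') as f:
            print('Starting transcribing ... ', end='')
            transcript = openai.Audio.transcribe('whisper-1', f)
            print('Done!')
    # The response is a dict-like object; the text lives under 'text'
    return transcript['text']
```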
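The snippet above targets the pre-1.0 `openai` Python SDK. With `openai>=1.0`, the same calls live on a client object. The sketch below also shows one way to set the `not_english` flag automatically: request `verbose_json` output, which includes the language Whisper detected, and translate only when that language is not English. The helper names are hypothetical, and the sketch assumes `OPENAI_API_KEY` is set in the environment.

```python
def is_english(language_label):
    """Accept either an ISO code ("en") or a language name ("english")."""
    return language_label.strip().lower() in {"en", "english"}


def to_english_text(audio_file, client=None):
    """Transcribe English audio; translate anything else into English."""
    if client is None:
        from openai import OpenAI  # openai>=1.0; reads OPENAI_API_KEY from the environment

        client = OpenAI()
    with open(audio_file, "rb") as f:
        # verbose_json responses include the language Whisper detected
        detected = client.audio.transcriptions.create(
            model="whisper-1", file=f, response_format="verbose_json"
        )
    if is_english(detected.language):
        return detected.text
    with open(audio_file, "rb") as f:
        translated = client.audio.translations.create(model="whisper-1", file=f)
    return translated.text
```

Note that non-English audio is sent to Whisper twice (once to detect the language, once to translate), so for audio you already know is non-English, calling `client.audio.translations.create` directly is cheaper.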

3. Prompt Engineering for Summarizing the video using an LLM

Now that Whisper has given us the raw text of the video, the next step is to summarize it with a large language model. This example sends a prompt to OpenAI's gpt-3.5-turbo model, but you could also use an LLM of your choice, such as a Hugging Face summarization model.

In our example we designed a prompt asking the LLM for a Title, Introduction Paragraph, Summary with bullet points, and Conclusion from the transcript output of Whisper.

```python
# `transcript` holds the raw text returned by Whisper in the previous step
system_prompt = 'I want you to act as a Life Coach.'

prompt = f'''Create a summary of the following text.

Text: {transcript}

Add a title to the summary.

Your summary should be informative and factual, covering the most important aspects of the topic.

Start your summary with an INTRODUCTION PARAGRAPH that gives an overview of the topic FOLLOWED
by BULLET POINTS if possible AND end the summary with a CONCLUSION PHRASE.'''
```
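To send this prompt to gpt-3.5-turbo, a sketch using the `openai>=1.0` chat completions API could look like this. `build_messages` and `summarize` are hypothetical helper names, the prompt text is copied from above, and an `OPENAI_API_KEY` environment variable is assumed.

```python
SYSTEM_PROMPT = "I want you to act as a Life Coach."


def build_messages(transcript, system_prompt=SYSTEM_PROMPT):
    """Wrap the summary prompt and transcript in the chat message format."""
    prompt = (
        "Create a summary of the following text.\n\n"
        f"Text: {transcript}\n\n"
        "Add a title to the summary.\n\n"
        "Your summary should be informative and factual, covering the most important aspects of the topic.\n\n"
        "Start your summary with an INTRODUCTION PARAGRAPH that gives an overview of the topic FOLLOWED "
        "by BULLET POINTS if possible AND end the summary with a CONCLUSION PHRASE."
    )
    return [
        {"role": "system", "content": system_prompt},
        {"role": "user", "content": prompt},
    ]


def summarize(transcript, client=None, model="gpt-3.5-turbo"):
    """Ask the chat model for a structured summary and return its text."""
    if client is None:
        from openai import OpenAI  # openai>=1.0; reads OPENAI_API_KEY from the environment

        client = OpenAI()
    response = client.chat.completions.create(model=model, messages=build_messages(transcript))
    return response.choices[0].message.content
```

The system message sets the model's persona and the user message carries the instructions and transcript, so you can swap the persona for one that suits your content without touching the rest of the prompt.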

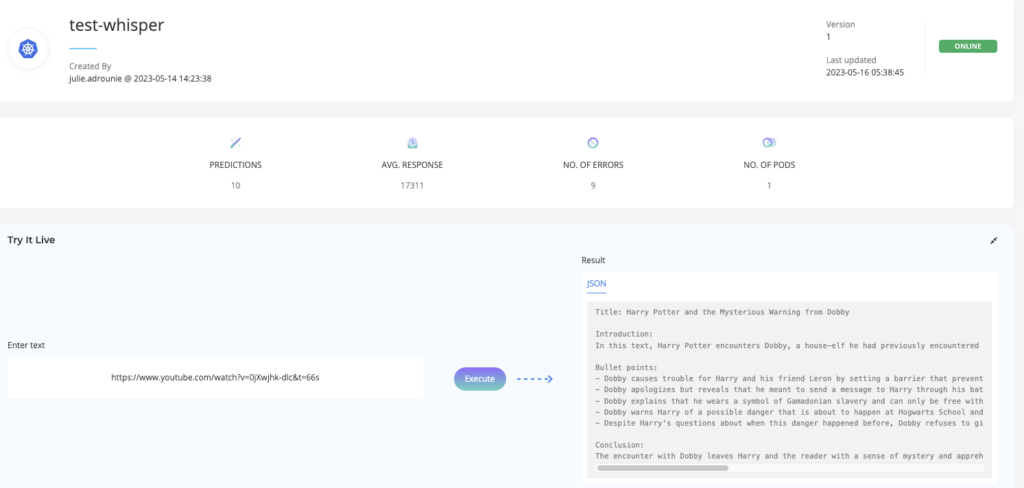

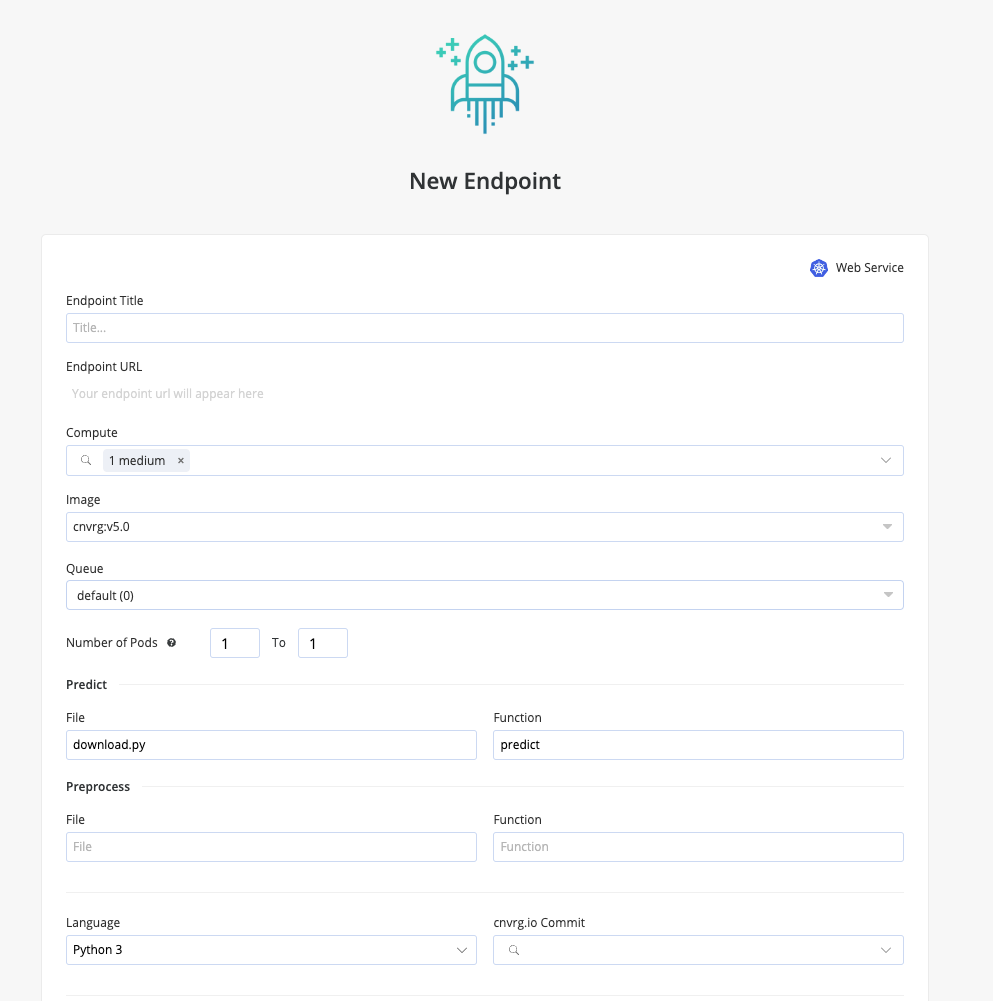

4. How to Deploy your Pipeline to Production Using cnvrg.io on Intel Developer Cloud resources

Once our code is working, we can create a function that combines all of these steps. In our example, we created a download.py file with a “predict” function that takes a YouTube link as input and runs the download, Whisper, and LLM steps when it is called.
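The download.py file itself is not shown in this tutorial, so here is a hedged sketch of how a `predict` function could chain the steps. To keep it self-contained, the three steps are passed in as functions; `build_predict` is a hypothetical helper, and the assumption that the endpoint calls `predict` with the YouTube link as its only argument should be checked against your cnvrg.io endpoint settings.

```python
from typing import Callable, Dict


def build_predict(
    download: Callable[[str], str],
    to_english_text: Callable[[str], str],
    summarize: Callable[[str], str],
) -> Callable[[str], Dict[str, str]]:
    """Chain the pipeline steps into a single predict(youtube_url) function."""

    def predict(youtube_url: str) -> Dict[str, str]:
        url = youtube_url.strip()
        if not url:
            raise ValueError("a YouTube URL is required")
        audio_path = download(url)                # step 1: fetch the audio track
        transcript = to_english_text(audio_path)  # step 2: Whisper transcription or translation
        summary = summarize(transcript)           # step 3: LLM summary
        return {"transcript": transcript, "summary": summary}

    return predict
```

In download.py you would then write something like `predict = build_predict(my_download, my_transcribe, my_summarize)`, where the three arguments are placeholders for your own step functions. Returning a dictionary keeps the response easy to serialize as JSON.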

Next, you can create a cnvrg.io Endpoint to serve this model on Intel Developer Cloud resources as a lightweight REST API service which can easily be queried through the web.
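As an illustration of querying the endpoint from Python with only the standard library, here is a sketch. The `Cnvrg-Api-Key` header and the `input_params` payload key reflect our understanding of cnvrg.io's endpoint request format and should be treated as assumptions; copy the exact URL, header, and payload from the sample request shown on your endpoint's page. `build_endpoint_request` and `query_endpoint` are hypothetical helpers.

```python
import json
import urllib.request


def build_endpoint_request(endpoint_url, api_token, youtube_url):
    """Build a POST request for a deployed endpoint (header and payload names are assumptions)."""
    payload = json.dumps({"input_params": youtube_url}).encode("utf-8")
    headers = {
        "Cnvrg-Api-Key": api_token,  # assumed auth header; confirm on your endpoint page
        "Content-Type": "application/json",
    }
    return urllib.request.Request(endpoint_url, data=payload, headers=headers, method="POST")


def query_endpoint(endpoint_url, api_token, youtube_url, timeout=600):
    """Send the request and decode the JSON response."""
    request = build_endpoint_request(endpoint_url, api_token, youtube_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

The generous default timeout allows for downloading, transcribing, and summarizing a long video in a single request.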

cnvrg.io sets up the network requirements and deploys the service to Kubernetes, enabling autoscaling so the service can keep up with incoming traffic, and adds an authentication token to secure access. cnvrg.io also provides a suite of monitoring tools that simplify managing a web service and tracking its performance.

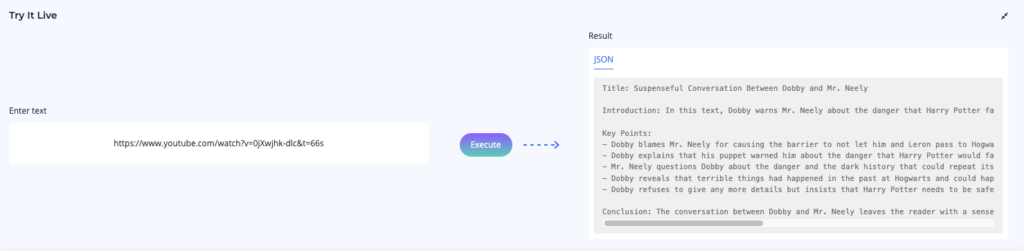

In a few seconds our endpoint will be fully deployed and can be used in our downstream applications!

To test our example, I used a clip from Harry Potter in Hebrew. Thanks to Whisper's translation functionality, the endpoint returned the summary in English even though the input was not in English.

5. What next? How to Add your Own Dataset and Set Up Feedback Loop for Continual Learning

Now that we have created a YouTube-to-text summarizer on cnvrg.io, there are additional tools you can use to manage this model in a production scenario.

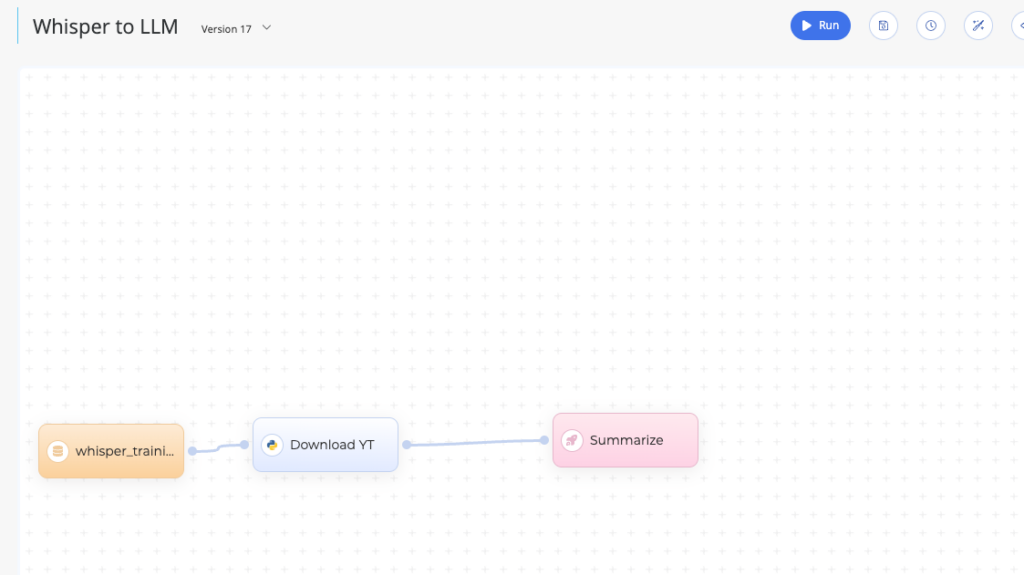

- Using Flows – you can connect this process to your own dataset for training and auto deploy to your cnvrg.io endpoint using a Deployment Task in our Drag-and-Drop pipeline Tool.

2. Using Feedback Loops

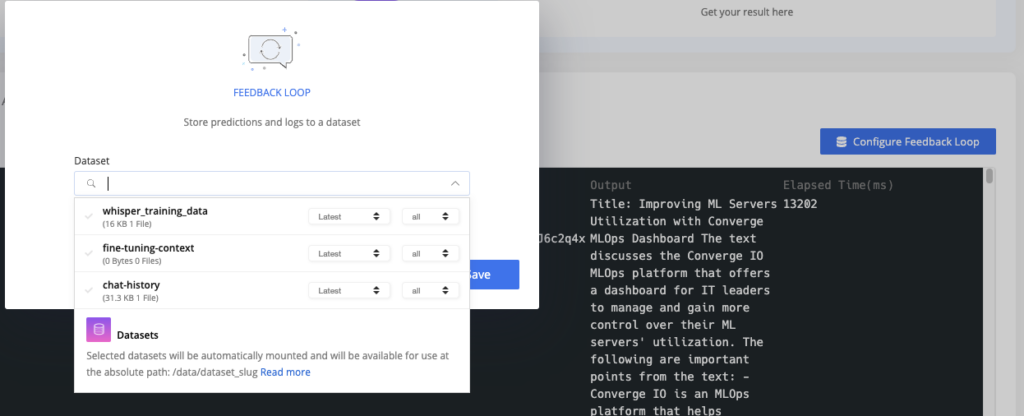

Additionally, endpoints offer the ability to send stored predictions to a dataset using Feedback Loops. Feedback Loops allow you to manually or automatically export the data (inputs, predictions and so on) from the endpoint into a cnvrg.io dataset.

Conclusion

New AI tools are emerging fast, putting powerful models such as Whisper, ChatGPT, and other LLMs at our fingertips. This guide has demonstrated how businesses and individuals can build an efficient AI pipeline for transcribing, translating, and summarizing video content. Combining these models into one pipeline lets us productionize, personalize, and scale them using cnvrg.io and Intel Developer Cloud.

Whisper, an open-source neural network, serves as a robust foundation for transcribing and translating videos, while LLMs like ChatGPT excel at generating human-like text summaries. By utilizing cnvrg.io’s user-friendly interface and machine learning platform, businesses and individuals can easily integrate these technologies into their workflows, regardless of coding experience. The seamless integration of Whisper and ChatGPT with cnvrg.io’s infrastructure ensures a smooth and efficient video summarization process.

In summary, the convergence of AI technologies, open-source models, and the capabilities of cnvrg.io empowers businesses and individuals to automate video transcription, translation, and summarization processes effectively. By harnessing the power of Whisper, ChatGPT, and cnvrg.io, organizations can unlock new levels of accessibility, efficiency, and innovation in video summarization, ultimately driving productivity and enhancing their workflows.